Intel's Larrabee Architecture Disclosure: A Calculated First Move

by Anand Lal Shimpi & Derek Wilson on August 4, 2008 12:00 AM EST - Posted in GPUs

Thread and Data Management: It's Time to Blow Your Mind

With both the recent NVIDIA and AMD graphics hardware launches, we spent quite a bit of time talking about thread management. Larrabee, however, is designed to be more of a collection of general purpose scalar and vector processing units, so vertex, primitive and pixel data (along with the associated shader programs) are managed in software. Whereas we have previously discussed what a context is for AMD and NVIDIA graphics hardware, a true context is going to be a different thing altogether on Larrabee.

Before proceeding, we have to point out that NVIDIA and AMD are under no obligation to tell us how their architectures are physically implemented. It is entirely possible that many of the attributes we ascribe to their hardware are not physical attributes at all, but simply reflections of how hardware resources are used. Recent discussions with both companies about certain realities of their hardware revealed that their position is this: if the system behaves like a specific physical implementation, then it effectively is that physical implementation.

Of course, we disagree. And it is possible that some of what Intel is doing has more in common with NVIDIA and AMD than Intel is letting on. But we'll go on what we've got for now, and assume that what Intel is doing is as divergent as it sounds.

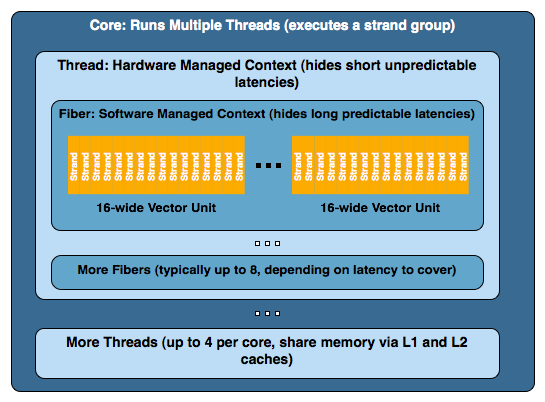

Each Larrabee core on a chip (and it seems likely the final product will have some multiple of 8 cores) can maintain 4 simultaneous threads, keeping 4 contexts active at a time. This makes each core appear as 4 virtual processors to software running directly on the hardware, even though all four threads share a single set of execution resources. Most likely, the major purpose of this is to hide some of the long latency incurred when going to memory for texture data and the like.
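To illustrate why a handful of hardware contexts helps hide memory latency, here is a toy model in Python. It is a sketch under loudly stated assumptions, not a description of Larrabee: we don't know the real thread switching policy or latencies, so the model assumes an in-order core that keeps issuing from one context until that context stalls on a load, then switches to the next ready context. The function name `utilization` and all numbers are hypothetical.

```python
def utilization(num_contexts: int, work_per_load: int, load_latency: int,
                total_cycles: int = 10_000) -> float:
    """Fraction of cycles in which a toy in-order core issues an instruction.

    Each hardware context runs `work_per_load` compute instructions, then
    issues a load and stalls for `load_latency` cycles. The core keeps issuing
    from the current context until it stalls, then switches to the next ready
    context in round-robin order. If no context is ready, the cycle is wasted.
    """
    ready_at = [0] * num_contexts                # cycle each context may issue again
    remaining = [work_per_load] * num_contexts   # compute left before the next load
    current = 0
    busy = 0
    for cycle in range(total_cycles):
        for offset in range(num_contexts):
            ctx = (current + offset) % num_contexts
            if ready_at[ctx] <= cycle:
                busy += 1
                remaining[ctx] -= 1
                if remaining[ctx] == 0:
                    # Issue a load: this context waits until the data returns.
                    ready_at[ctx] = cycle + 1 + load_latency
                    remaining[ctx] = work_per_load
                current = ctx  # stay on this context until it stalls
                break
    return busy / total_cycles


if __name__ == "__main__":
    # Hypothetical numbers: 10 instructions per load, 30-cycle memory latency.
    for n in (1, 2, 4):
        print(n, "context(s):", utilization(n, work_per_load=10, load_latency=30))
```

With those made-up numbers, one context keeps the core busy only 25% of the time, two contexts 50%, and four contexts hide the latency completely, because 4 × 10 instructions of other work cover the 10 + 30 cycles it takes one context to come back around.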

Now, for the purpose of graphics rendering, whether through Intel's own software rendering library or when it emulates DirectX and OpenGL, a thread is set up to manage the resources for a larger group of instructions and data that Intel calls a "fiber". Normally a thread will manage 8 fibers at a time, with the hardware thread maintaining each fiber's context in software. The fiber's job is to manage the execution of data parallel kernels on multiple groups of 16 "strands" (because the vector processor is 16-wide). A strand is what we have traditionally called a thread on other graphics hardware. What makes this confusing is that Intel's hardware threads are executing software that emulates hardware features of other architectures.
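To make the thread/fiber/strand hierarchy a little more concrete, here is a minimal Python sketch that uses generators to stand in for fibers. It assumes one hardware thread cooperatively round-robins among 8 fibers in software, that each fiber runs a kernel over its strands 16 at a time (one 16-wide vector step per group), and that a fiber yields after each group, as if switching away while a texture fetch is outstanding. The names `fiber`, `run_hardware_thread` and `shade` are hypothetical; this is not Intel's renderer code.

```python
from collections import deque
from typing import Callable, Dict, Generator, List

VECTOR_WIDTH = 16       # Larrabee's vector unit is 16 lanes wide
FIBERS_PER_THREAD = 8   # the article's "normally 8 fibers" per thread

Fiber = Generator[None, None, None]


def fiber(strand_ids: List[int], kernel: Callable[[int], int],
          results: Dict[int, int]) -> Fiber:
    """Run `kernel` over this fiber's strands, one 16-wide group at a time."""
    for start in range(0, len(strand_ids), VECTOR_WIDTH):
        group = strand_ids[start:start + VECTOR_WIDTH]
        # On real hardware this group would map onto the 16 vector lanes.
        for s in group:
            results[s] = kernel(s)
        yield  # switch away, e.g. while a (hypothetical) texture fetch is pending


def run_hardware_thread(fibers: List[Fiber]) -> int:
    """Round-robin over fibers in software until all finish; return switch count."""
    queue = deque(fibers)
    switches = 0
    while queue:
        f = queue.popleft()
        try:
            next(f)
            queue.append(f)  # not finished: requeue behind the other fibers
            switches += 1
        except StopIteration:
            pass  # fiber finished; its software context is simply dropped
    return switches


def shade(pixel: int) -> int:
    return pixel * 2  # stand-in for a real pixel shader


if __name__ == "__main__":
    results: Dict[int, int] = {}
    pixels = list(range(256))               # 256 strands for one thread
    per = len(pixels) // FIBERS_PER_THREAD  # 32 strands (2 groups) per fiber
    fibers = [fiber(pixels[i * per:(i + 1) * per], shade, results)
              for i in range(FIBERS_PER_THREAD)]
    print("fiber switches:", run_hardware_thread(fibers))
    print("strands shaded:", len(results))
```

The point of the design is that the fiber switch is just a cheap software context change inside one hardware thread, which is the part Intel handles in software while other GPUs handle it in hardware.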

To put it together a little better, imagine one of Intel's threads as one of NVIDIA's TPCs, a fiber as an SM, and a strand as a thread. Okay, so it isn't that simple (simple?). But it is a rough way of looking at it, and a quick way of understanding why the naming is different here.

Let's take a deeper look at what goes on. With 4 threads per core (and at least 8, hopefully something more like 32, cores), 8 fibers per thread, and some multiple of 16 strands per fiber, we could end up with a huge number of strands being managed simultaneously. And these are only the active, running threads. Since Larrabee will be a CPU in the true sense of the term, we can have as many threads as necessary live and waiting for a time slice. On a normal CPU this would be managed by the operating system, but since Larrabee will see the light of day as a graphics card, the driver will probably handle the timesharing an OS would normally perform.
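The arithmetic behind "a huge number of strands" is easy to check. The sketch below simply multiplies the figures above; the function name is ours, and the defaults (4 threads per core, 8 fibers per thread, one group of 16 strands per fiber) are the article's minimums, so the results are lower bounds.

```python
def active_strands(cores: int, threads_per_core: int = 4,
                   fibers_per_thread: int = 8, strand_groups_per_fiber: int = 1,
                   vector_width: int = 16) -> int:
    """Lower bound on simultaneously managed strands (groups of 16 per fiber)."""
    return (cores * threads_per_core * fibers_per_thread
            * strand_groups_per_fiber * vector_width)


if __name__ == "__main__":
    print(active_strands(8))   # 8 cores:  8 * 4 * 8 * 16 = 4096 strands
    print(active_strands(32))  # 32 cores: 32 * 4 * 8 * 16 = 16384 strands
```

Every extra group of 16 strands per fiber scales these numbers up again, which is where "some multiple of 16" comes in.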

While running a ridiculous number of threads per core might kill performance, unlike on current GPUs, a shortage of resources doesn't prevent threads from being created. Six of one, half dozen of the other? Maybe, and maybe not. Having active threads with data available to context switch to is key to hiding latency in NVIDIA and AMD hardware: if enough threads cannot be actively maintained, stalls happen and kill performance. Similar issues will affect Intel, but keeping dual-issue in-order hardware busy might be easier if Larrabee can fall back on traditional CPU thread management paradigms to handle an abundance of threads running software that manages data parallel kernels.
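The "traditional CPU thread management" fallback amounts to oversubscription: more software threads exist than hardware contexts, and a scheduler (the article speculates it would be the driver) time-slices them. Below is a generic round-robin time-slice scheduler as a sketch of that idea, assuming 4 contexts per core and an arbitrary slice length; `run_time_sliced` and its parameters are hypothetical and say nothing about how Intel's driver actually works.

```python
from collections import deque
from typing import Dict, List


def run_time_sliced(work: Dict[str, int], hw_contexts: int = 4,
                    time_slice: int = 3) -> List[List[str]]:
    """Round-robin software threads onto a fixed number of hardware contexts.

    `work` maps a software thread name to the units of work it still needs.
    Each round, up to `hw_contexts` waiting threads are loaded into contexts
    and each runs for at most `time_slice` units; unfinished threads go to the
    back of the run queue. Returns which threads ran in each round.
    """
    remaining = dict(work)
    run_queue = deque(remaining)  # every thread starts live and waiting
    rounds: List[List[str]] = []
    while run_queue:
        # Load as many waiting threads as there are hardware contexts.
        active = [run_queue.popleft()
                  for _ in range(min(hw_contexts, len(run_queue)))]
        rounds.append(active)
        for name in active:
            remaining[name] -= min(time_slice, remaining[name])
            if remaining[name] > 0:
                run_queue.append(name)  # switched out, but still live
    return rounds


if __name__ == "__main__":
    # Six software threads, each needing 5 units of work, on one 4-context core.
    for i, r in enumerate(run_time_sliced({f"t{n}": 5 for n in range(6)})):
        print("round", i, r)
```

Nothing stops a seventh or seventieth thread from being created here; extra threads just wait longer for a slice, which is the trade-off the paragraph above describes.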

101 Comments


del - Friday, August 15, 2008

Don't be a hater. :P Intel has got it goin' on right now. Believe in the POWAH of Larrabee... unless it proves to be a failure upon release. :)

Thatguy97 - Sunday, June 28, 2015

IM FROM TEH FUTURE LARRABAE WAS CANCELLED OMG XDDDDD

atlmann10 - Saturday, August 9, 2008

Think about this, OK: AMD originally was a private IBM CPU manufacturer. Then it was bought out and run as a side unit of INTEL, and that was dropped after they were done with it. So in a way they were partners, and I'm sure there was some friendliness. As it's always been said, keep your friends close but your enemies closer. There have been some things, especially in these past two years, that struck me as kind of odd, such as AMD's graphics chips running fine on an X38/X48 chipset, the physics collaboration, and a few other rumors. Then Nvidia starts spouting off about how they could kick INTEL's A77, etc. Now AMD has a definite GPU coprocessor in ATI, and they want to break into the GPU market. They know there will be graphics competition, with Nvidia being their largest competitor because they're dedicated solely to GPUs and have a reputation. However, now AMD has some chips that compete head-on, weakening Nvidia to a point. Then AMD is getting more and more out of their CPUs, GPUs and chipsets, so INTEL jumps into the CPU/GPU market just like AMD. Either way, more people are going to go with INTEL CPUs and many other products where AMD is kind of a fringe player. Would you rather compete full-on against 2 major GPU manufacturers, or attempt to kind of co-align yourself with their competitor while they're somewhat down? Then throw out a whole new way to do graphics that performs well while Nvidia is already losing market share. So more people try it and the same number of people go with ATI. That leaves a much smaller market for Nvidia, plus they're paying back some 200 million dollars for bad GPUs right now, as well as a few other problems they've been having. Now, this is not anything I know, but knowing INTEL loves to stick it to competitors when they're weak, think about it.

benkantor - Wednesday, August 6, 2008

if you could fit 10 Larrabees on 143 mm^2, you could fit 40 Larrabees on 286 mm^2, not 20... :P

MamiyaOtaru - Saturday, August 9, 2008

For the love of education. We've already been through this. See the end of page 6 through page 7 in the comments section. 143mm^2 doesn't mean 143*143. It means 143 square millimeters. 286 square millimeters is twice the area, allowing twice as many cores.

http://img379.imageshack.us/my.php?image=squaremmh...

The article is right and you are so very wrong.

Barack Obama - Wednesday, August 6, 2008

Derek and Anand deliver again!

KGR - Wednesday, August 6, 2008

I am not a professional in software or hardware, which is why this question may sound like nonsense. If Larrabee will have a software renderer and be programmed in C++, is it possible that it will not depend on Windows? I mean, if it doesn't need DirectX, can we run the games on Linux as well??

npoe1 - Tuesday, August 5, 2008

I enjoyed reading this so much. I think this kind of article is what AnandTech needs; I usually go to Ars Technica to read things like this one. Again, thanks!

TrEmEnDo - Tuesday, August 5, 2008

I am definitely impressed with this new development and I expect that this technology will be disruptive down the road; however, I feel that somehow they are about to commit another of their megalomaniac mistakes.

Has anyone stopped for a sec and looked at where the whole gaming industry is heading? Are PCs the future gaming platform? Maybe I am missing something, but aren't the big guys already struggling to retain a 'decent' percentage of the multibillion gaming pie (PC Gaming Alliance, anyone...)? I believe that whether we tech enthusiasts and hardcore PC gamers like it or not, the console arena is where the big guns are going to be playing a few years from now.

Guys, we see this happening every day: titles appearing and disappearing b/c companies don't want to commit the resources to develop games for more than one or two platforms (normally doing sloppy work, BTW). Now that the grandpas of graphics hardware have managed to get DX/D3D-derived engines into the last-gen consoles (Xenos, RSX), and a terribly inertial and rigid developer community avoids and whines about how difficult it is to program for the few hardware 'jewels' we already have in our hands (Cell/RV770/G200), do you think anyone except Intel is in the mood for yet another graphics industry spin?

I have no doubt that this new development will have its own niche application, or someone will definitely find something appropriate for it, but saying that Larrabee CAN do graphics and saying Larrabee will kick ass so badly that 3 years from now we will all be gaming on a Larrabee-equipped computer are two very different things.

Congrats to Intel as the fathers of the creature, and congrats to us for seeing the tech world move on... but just don't think this will change the world as we know it.

hooflung - Tuesday, August 5, 2008

They are doing something very AMD-like, taking it a step further and tossing in a few POWER ideas. I just wonder what the power profile will look like and who will partner up with Intel for it.

I am sure they will have 4+ of these cores built into integrated chipsets for OEMs and laptops to really boost those areas. And people who buy laptops will see that they can get a desktop with a 'bigger Larrabee' and play their games faster than on their budget/laptop computer.

So it does make sense. However, it is an empire built on a lot of ifs. It will be fun to watch. Thanks, AnandTech, for the informative article.