The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

FreeSync vs. G-SYNC Performance

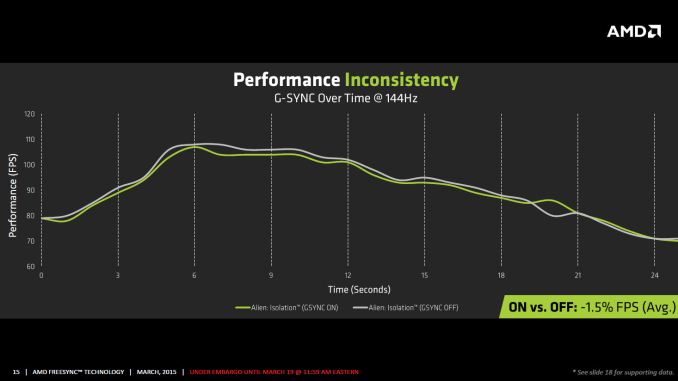

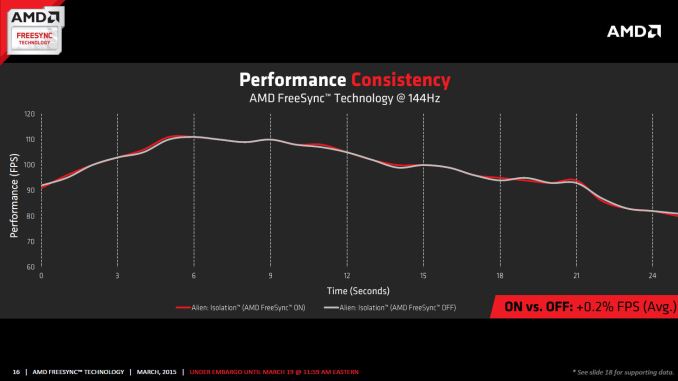

One item that piqued our interest during AMD’s presentation was a claim that there’s a performance hit with G-SYNC but none with FreeSync. NVIDIA has said as much in the past, though they also noted at the time that they were "working on eliminating the polling entirely", so things may have changed. Even so, the difference was generally quite small – less than 3%, or basically not something you would notice without capturing frame rates. AMD did some testing, however, and presented the following two slides:

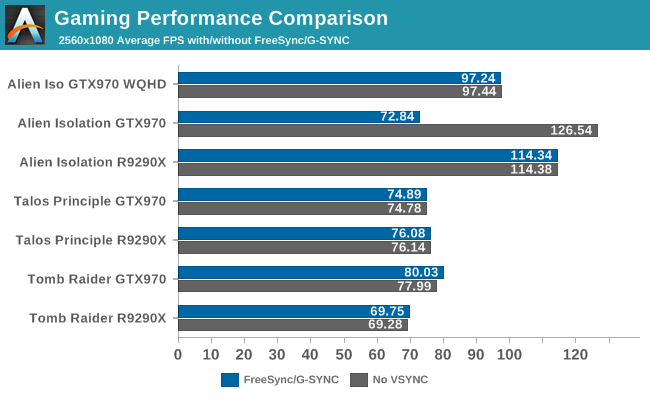

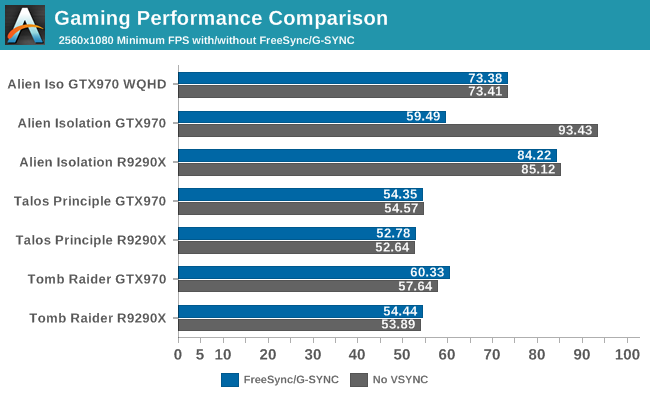

It’s probably safe to say that AMD is splitting hairs when they show a 1.5% performance drop in one specific scenario compared to a 0.2% performance gain, but we wanted to see if we could corroborate their findings. Having tested plenty of games, we already know that most games – even those with built-in benchmarks that tend to be very consistent – will have minor differences between benchmark runs. So we picked three games with deterministic benchmarks and ran with and without G-SYNC/FreeSync three times. The games we selected are Alien Isolation, The Talos Principle, and Tomb Raider. Here are the average and minimum frame rates from three runs:

Except for a glitch with testing Alien Isolation using a custom resolution, our results basically don’t show much of a difference between enabling/disabling G-SYNC/FreeSync – and that’s what we want to see. While AMD’s slides showed a performance drop with Alien Isolation using G-SYNC, we weren’t able to reproduce that in our testing; in fact, we even showed a measurable 2.5% performance increase with G-SYNC and Tomb Raider. But again let’s be clear: 2.5% is not something you’ll notice in practice. FreeSync meanwhile shows results that are well within the margin of error.
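For readers who want to run the same kind of check on their own benchmark logs, here is a minimal Python sketch of one way to decide whether a sync on/off difference is "within the margin of error". It compares the change in mean FPS against the run-to-run spread of the repeated runs. The function names and the sample numbers are hypothetical and only illustrate the arithmetic; they are not our measured results, and using the max-minus-min spread as the noise estimate is an assumption rather than a formal statistical test.

```python
from statistics import mean


def percent_change(baseline, value):
    """Relative change of value versus baseline, in percent."""
    return (value - baseline) / baseline * 100.0


def run_spread(runs):
    """Run-to-run spread: (max - min) as a percentage of the mean."""
    return (max(runs) - min(runs)) / mean(runs) * 100.0


def compare(sync_off, sync_on):
    """Summarise repeated average-FPS runs with adaptive sync off and on."""
    delta = percent_change(mean(sync_off), mean(sync_on))
    # Treat the larger of the two spreads as the noise floor (an assumption).
    noise = max(run_spread(sync_off), run_spread(sync_on))
    return {
        "delta_pct": delta,
        "noise_pct": noise,
        "within_noise": abs(delta) <= noise,
    }


# Hypothetical numbers for illustration only, not measured results.
example_off = [60.0, 61.0, 59.0]
example_on = [60.5, 60.0, 59.5]
result = compare(example_off, example_on)
print(result)
```

With only three runs per setting this is a rough sanity check at best; more runs would be needed before reading much into any difference of a percent or two.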

What about that custom resolution problem on G-SYNC? We used the ASUS ROG Swift with the GTX 970, and we thought it might be useful to run the same resolution as the LG 34UM67 (2560x1080). Unfortunately, that didn’t work so well with Alien Isolation – the frame rates plummeted with G-SYNC enabled for some reason. Tomb Raider had a similar issue at first, but when we created additional custom resolutions with multiple refresh rates (60/85/100/120/144 Hz) the problem went away; we couldn't ever get Alien Isolation to run well with G-SYNC using our custom resolution, however. We’ve notified NVIDIA of the glitch, but note that when we tested Alien Isolation at the native WQHD setting the performance was virtually identical, so this only seems to affect performance with custom resolutions and it is also game specific.

For those interested in a more detailed graph of the frame rates of the three runs (six total per game and setting, three with and three without G-SYNC/FreeSync), we’ve created a gallery of the frame rates over time. There’s so much overlap that mostly the top line is visible, but that just proves the point: there’s little difference other than the usual minor variations between benchmark runs. And in one of the games, Tomb Raider, even using the same settings shows a fair amount of variation between runs, though the average FPS is pretty consistent.

350 Comments

P39Airacobra - Monday, March 23, 2015 - link

Why will it not work with the R9 270? That is BS! To hell with you AMD! I paid good money for my R9 series card! And it was supposed to be current GCN not GCN 1.0! Not only do you have to deal with crap drivers that cause artifacts! Now AMD is pulling off marketing BS!

Morawka - Tuesday, March 24, 2015 - link

Anandtech, have you seen the PCPerspective article on Gsync vs Freesync? PCper was seeing ghosting with freesync. Can you guys corroborate their findings?

shadowjk - Tuesday, March 24, 2015 - link

Am I the only one who would want a 24" ish 1080p IPS screen with gsync or freesync?

xenol - Tuesday, March 24, 2015 - link

FreeSync and GSync shouldn't have ever happened.

The problem I have is "syncing" is a relic of the past. The only reason why you needed to sync with a monitor is because they were using CRTs that could only trace the screen line by line. It just kept things simpler (or maybe practical) if you weren't trying to fudge with the timing of that on the fly.

Now, you can address each individual pixel. There's no need to "trace" each line. DVI should've eliminated this problem because it was meant for LCD's. But no, in order to retain backwards compatibility, DVI's data stream behaves exactly like VGA's. DisplayPort finally did away with this by packetizing the data, which I hope means that display controllers only change what they need to change, not "refresh" the screen. But given they still are backwards compatible with DVI, I doubt that's the case.

Get rid of the concept of refresh rates and syncing altogether. Stop making digital displays behave like CRTs.

Mrwright - Wednesday, March 25, 2015 - link

Why do I need either Freesync or Gsync when I already get over 100fps in all games at 2560x1440? All I want is a 144Hz 2560x1440 monitor without the Gsync tax, as Gsync and Freesync are only useful if you drop below 60fps.

ggg000 - Thursday, March 26, 2015 - link

Freesync is a joke:

https://www.youtube.com/watch?feature=player_embed...

https://www.youtube.com/watch?v=VJ-Pc0iQgfk&fe...

https://www.youtube.com/watch?v=1jqimZLUk-c&fe...

https://www.youtube.com/watch?feature=player_embed...

https://www.youtube.com/watch?v=84G9MD4ra8M&fe...

https://www.youtube.com/watch?v=aTJ_6MFOEm4&fe...

https://www.youtube.com/watch?v=HZtUttA5Q_w&fe...

ghosting like hell.

willis936 - Tuesday, August 25, 2015 - link

LCD is a memory array. If you don't use it you lose it. You need to physically refresh each pixel the same number of times a second. You could save on average bitrate by only sending changed pixels, but that requires more work on the GPU and adds latency. What's more, it doesn't change what your max bitrate needs to be, and don't even bother suggesting multiple frame buffers, as that adds TV-tier latency.

chizow - Monday, March 30, 2015 - link

And more evidence of FreeSync's (and AnandTech's) shortcomings, again from PCPer. I remember a time AnandTech was willing to put in the work with the kind of creativeness needed to come to such conclusions, but I guess this is what happens when the boss retires and takes a gig with Apple.

http://www.pcper.com/reviews/Graphics-Cards/Dissec...

PCPer is certainly the go-to now for any enthusiast that wants answers beyond the superficial spoon-fed vendor stories.

ZmOnEy132 - Saturday, December 17, 2016 - link

Free sync is not meant to increase fps. The whole point is visuals. It stops visual tearing which is why it drops frame rates to match the monitor. Fps has no effect on what free sync is meant to do. It's all visuals not performance. I hate when people write reviews that don't know what they're talking about. You're gonna get dropped frame rates because that means the frame isn't ready yet so the GPU doesn't give it to the display and holds onto it a tiny bit longer to make sure the monitor and GPU are both ready for that frame.