NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST

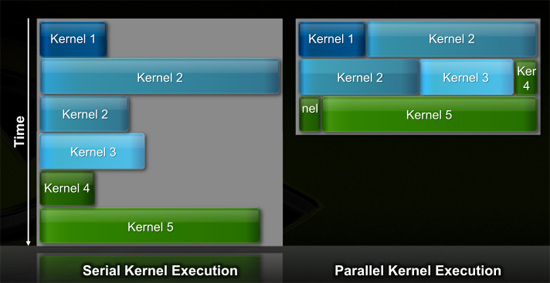

Efficiency Gets Another Boon: Parallel Kernel Support

In GPU programming, a kernel is the function or small program running across the GPU hardware. Kernels are parallel in nature and perform the same task(s) on a very large dataset.
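
For readers new to the term, here is a minimal sketch of the idea, written as a plain CPU-side Python emulation rather than real GPU code. The `launch` helper and `saxpy_kernel` function are hypothetical names made up for illustration. They only mimic how every thread runs the same small function on its own element of the data, with a global index computed as block index * block size + thread index, the convention CUDA programs commonly use.

```python
# Hypothetical CPU-side emulation of a GPU kernel launch (not real CUDA).
# Every "thread" runs the same function; only its index differs.
from typing import Callable, List


def saxpy_kernel(i: int, a: float, x: List[float], y: List[float], out: List[float]) -> None:
    """The 'kernel': computes one element of out = a * x + y."""
    if i < len(x):  # threads past the end of the data do nothing, as in typical CUDA code
        out[i] = a * x[i] + y[i]


def launch(kernel: Callable[..., None], grid_dim: int, block_dim: int, *args) -> None:
    """Run `kernel` once per (block, thread) pair, sequentially on the CPU.
    On a GPU these invocations would run in parallel across the SMs."""
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx * block_dim + thread_idx, *args)


if __name__ == "__main__":
    n = 10
    x = [float(i) for i in range(n)]
    y = [1.0] * n
    out = [0.0] * n
    block_dim = 4
    grid_dim = (n + block_dim - 1) // block_dim  # round up so every element gets a thread
    launch(saxpy_kernel, grid_dim, block_dim, 2.0, x, y, out)
    print(out)
```

The grid is rounded up to 3 blocks of 4 threads, so two threads fall past the end of the data and are filtered out by the bounds check.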

Typically, companies like NVIDIA don't disclose their hardware limitations until a developer bumps into one of them. On G80 and GT200, the entire chip could work on only one kernel at a time.

This usually isn't a problem for graphics: with millions of pixels to render, the problem is wider than the machine. But as you move into more general-purpose computing, not every kernel is going to be wide enough to fill the entire machine. If a single kernel couldn't fill every SM with threads, the leftover SMs simply sat idle. That's bad.
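
To put a number on the idle-SM problem, here is a toy occupancy model. It assumes, purely for illustration, that only one kernel runs at a time, that each thread block occupies exactly one SM, and that an SM holds one block at a time (real SMs can host several). The function names and the chip and kernel sizes in the example are hypothetical.

```python
# Toy occupancy model, not NVIDIA's scheduler. Assumptions: one kernel owns the chip
# at a time, each thread block occupies exactly one SM, and an SM holds one block.


def single_kernel_utilization(num_blocks: int, num_sms: int) -> float:
    """Fraction of reserved SM time doing useful work while a lone kernel runs."""
    if num_blocks <= 0 or num_sms <= 0:
        raise ValueError("num_blocks and num_sms must be positive")
    waves = (num_blocks + num_sms - 1) // num_sms  # rounds of blocks needed to finish
    return num_blocks / (waves * num_sms)          # busy SM-rounds / reserved SM-rounds


def idle_sms_in_last_wave(num_blocks: int, num_sms: int) -> int:
    """SMs left with nothing to do during the kernel's final round."""
    remainder = num_blocks % num_sms
    return 0 if remainder == 0 else num_sms - remainder


if __name__ == "__main__":
    # Hypothetical sizes: a 30-SM chip running a narrow kernel of only 8 blocks.
    print(single_kernel_utilization(8, 30))
    print(idle_sms_in_last_wave(8, 30))  # 22
```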

GT200 (left) vs. Fermi (right)

Fermi, once again, fixes this. Its global dispatch logic can now issue multiple kernels in parallel across the entire chip. Because Fermi is more than twice the size of GT200, the likelihood of idle SMs under a one-kernel-at-a-time scheme would have gone up tremendously, so NVIDIA needs to be able to dispatch multiple kernels in parallel to keep Fermi fed.
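
The benefit of concurrent dispatch can be sketched with an equally idealized model. In CUDA, independent kernels are typically submitted on separate streams so the hardware is allowed to overlap them; the Python below skips the API entirely and just counts time steps. It assumes every block takes one step, each SM runs one block at a time, the kernels are independent, and there is no cap on how many kernels can be resident at once. It is not a description of how Fermi's dispatcher actually schedules work, and the function names are hypothetical.

```python
# Idealized comparison of one-kernel-at-a-time vs. concurrent kernel dispatch.
# Assumptions (illustrative only): every thread block takes one time step, each SM runs
# one block at a time, kernels are independent, and any number of kernels may be resident.
from typing import Sequence


def ceil_div(a: int, b: int) -> int:
    return (a + b - 1) // b


def serial_dispatch_steps(blocks_per_kernel: Sequence[int], num_sms: int) -> int:
    """G80/GT200-style: the next kernel cannot start until the previous one drains."""
    return sum(ceil_div(b, num_sms) for b in blocks_per_kernel)


def concurrent_dispatch_steps(blocks_per_kernel: Sequence[int], num_sms: int) -> int:
    """Idealized Fermi-style: blocks from any kernel may fill any idle SM."""
    return ceil_div(sum(blocks_per_kernel), num_sms)


if __name__ == "__main__":
    kernels = [4, 4, 4, 4]  # four narrow, independent kernels (hypothetical sizes)
    print(serial_dispatch_steps(kernels, 16))      # 4
    print(concurrent_dispatch_steps(kernels, 16))  # 1
```

Four kernels that each fill only a quarter of a 16-SM chip need four rounds when run back to back, but fit in a single round when their blocks can share the machine.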

Application switch time (moving between graphics and CUDA mode) is also much faster on Fermi. NVIDIA says the transition is now 10x faster than on GT200, fast enough to be performed multiple times within a single frame. This is very important for implementing more elaborate GPU-accelerated physics (or PhysX, great ;)…).
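
A quick back-of-the-envelope calculation shows why a 10x cheaper switch matters at frame granularity. Only the 10x ratio comes from NVIDIA's claim; the 1 ms baseline switch cost below is a hypothetical placeholder, not a measured GT200 figure, and the helper names are made up for illustration.

```python
# Back-of-the-envelope cost of switching between graphics and CUDA within one frame.
# The 1.0 ms baseline is a hypothetical placeholder; only the 10x ratio is from the text.


def frame_budget_ms(fps: float) -> float:
    """Time available to render one frame at the given frame rate."""
    return 1000.0 / fps


def switch_overhead_fraction(switch_ms: float, round_trips: int, fps: float = 60.0) -> float:
    """Share of one frame spent on `round_trips` graphics->CUDA->graphics round trips
    (two switches each)."""
    return (2 * round_trips * switch_ms) / frame_budget_ms(fps)


if __name__ == "__main__":
    old_switch_ms = 1.0                 # hypothetical pre-Fermi cost per switch
    new_switch_ms = old_switch_ms / 10  # NVIDIA's "10x faster" claim
    for label, cost in (("old", old_switch_ms), ("new", new_switch_ms)):
        print(label, f"{switch_overhead_fraction(cost, round_trips=3):.1%}")
```

With these placeholder numbers, three round trips eat over a third of a 60 fps frame at the old cost but only a few percent at the new one.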

The connections to the outside world have also been improved. Fermi now supports simultaneous transfers to and from the CPU; previously, CPU->GPU and GPU->CPU transfers had to happen serially.
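
Concurrent transfers pay off most when data is streamed in batches, since the download of one batch can overlap the upload of the next. The sketch below treats this as a two-stage pipeline under simplifying assumptions: the function names are hypothetical, kernel compute time is ignored, and the two directions are assumed not to slow each other down.

```python
# Idealized model of streaming n equal batches through the GPU's host link.
# Assumptions (illustrative only): each batch needs an upload of `up_ms` and a download
# of `down_ms`, kernel time is ignored, and the two directions don't slow each other down.


def serial_copies_ms(n: int, up_ms: float, down_ms: float) -> float:
    """Copies in one direction at a time (pre-Fermi, per the text): no overlap at all."""
    return n * (up_ms + down_ms)


def overlapped_copies_ms(n: int, up_ms: float, down_ms: float) -> float:
    """Upload of batch k+1 overlaps download of batch k: a two-stage pipeline whose
    steady-state rate is set by the slower direction."""
    if n <= 0:
        return 0.0
    return up_ms + down_ms + (n - 1) * max(up_ms, down_ms)


if __name__ == "__main__":
    print(serial_copies_ms(3, 4.0, 3.0))      # 21.0
    print(overlapped_copies_ms(3, 4.0, 3.0))  # 15.0
```

In the example, three batches that each need 4 ms up and 3 ms down take 21 ms when copies must alternate, versus 15 ms when they overlap, because after the first batch the slower direction sets the pace.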


AtwaterFS - Wednesday, September 30, 2009

4 reals - this dude is clearly an Nvidia shill. Question is, do you really want to support a company that routinely supports this propaganda blitz in the comments of every Fn GPU article?

It just feels dirty doesn't it?

strikeback03 - Thursday, October 1, 2009

I doubt SiliconDoc is actually paid by nvidia. I've met people like this in real life who, for some reason, feel a need to support one company fanatically. Or he just enjoys ticking others off. One of my friends, while playing Call of Duty, sometimes just runs around trying to tick teammates off and get them to shoot back at him.

SiliconDoc - Thursday, October 1, 2009

If facing the truth and the facts makes you mad, it's your problem, and your fault. I certainly know of people like you describe, and let's face it, it is one of YOUR TEAMMATES---

--

Now, when you collective liars and deniers counter one of my pointed examples, you can claim something. Until then, you've got nothing.

And those last 3 posts, yours included, have nothing, except in your case, it shows what you hang with, and that pretty much describes the lies told by the ati fans, and how they work.

I have no doubt pointing them out "ticks them off".

The simple fix is, stop lying.

Yangorang - Wednesday, September 30, 2009

Honestly, all I want to know is:

When will it launch? (as in be available for actual purchase)

How much will it cost?

Will this beast even fit into my case...and how much power will it use?

How will it perform? (particularly I'm wondering about DX11 games...as it seems to be very much a big deal for ATI)

but heh none of these questions will be answered for a while I guess....

I'm also kinda wondering about:

How does the GT300 handle tessellation?

Does it feature Angle-Independent Anisotropic Filtering?

I really couldn't give a crap less about using my GPU for general computing purposes... I just want to play some good-looking games without breaking the bank...

haukionkannel - Thursday, October 1, 2009

Well, it's going to be a DX11 card, so it can handle tessellation. How well? That remains to be seen, but there is enough computing power to do it quite nicely. But the big question is not whether the GT300 is faster than the 5870 (it most probably is), but by how much, and how much it costs...

If you can buy two 5870s for the price of a GT300, it has to be really fast!

Interesting release, and a good article revealing the architecture behind this chip. I am sure that we will see more news around the release of Win7, even if the card is not released until 2010, just to make sure that not too many "potential" customers buy an ATI card by that time.

Also, as someone said before, this seems to be quite modular, so it's possible we'll see some cheaper, cut-down versions too. We need competition in the low and middle range as well. Whether the G300 design can do it remains to be seen.

SiliconDoc - Thursday, October 1, 2009

Well, that brings to mind another anandtech LIE.--

In the 5870 article's comment section, the article's writer and tester responded to a query from one of the fans and claimed the 5870 is "the standard 10.5".

Well, it is NOT. It is OVER 11", and it is longer than the 285, by a bit.

So, I just have to shake my head, and no one should have to wonder why. Even lying about the length of the ati card. It is nothing short of amazing.

silverblue - Thursday, October 1, 2009

http://vr-zone.com/articles/sapphire-ati-radeon-hd... They say 10.5".

SiliconDoc - Thursday, October 1, 2009

I'm sorry, I realize I left you up in the air, since you're so convinced I don't know what I'm talking about. "The card that we will be showing you today is the reference Radeon HD 5870, which is a dual-slot graphics card that measures in at 11.1" in length."

http://www.legitreviews.com/article/1080/2/

I mean really, you should have given up a long time ago.

silverblue - Friday, October 2, 2009

Anand, could you or Ryan come back to us with the exact length of the reference 5870, please? I know Ryan put 10.5" in the review, but I'd like to be sure. It's best to check with someone who actually has a card to measure.

silverblue - Friday, October 2, 2009

You know something? I'm just going to back down and say you're right. You might just be, but I couldn't give a damn anymore.