AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

Eyefinity

Somewhere around 2006 - 2007 ATI was working on the overall specifications for what would eventually turn into the RV870 GPU. These GPUs are designed by combining the views of ATI's engineers with the demands of the developers, end-users and OEMs. In the case of Eyefinity, the initial demand came directly from the OEMs.

ATI was working on the mobile version of its RV870 architecture and realized that it had a number of DisplayPort (DP) outputs at the request of OEMs. The OEMs wanted up to six DP outputs from the GPU, but with only two active at a time. The six came from two for internal panel use (if an OEM wanted to do a dual-monitor notebook, which has happened since), two for external outputs (one DP and one DVI/VGA/HDMI for example), and two for passing through to a docking station. Again, only two had to be active at once, so the GPU had six sets of DP lanes but only enough display engines to drive two simultaneously.

ATI looked at the effort required to enable all six outputs at the same time and made it so, thus the RV870 GPU can output to a maximum of six displays at the same time. Not all cards support this as you first need to have the requisite number of display outputs on the card itself. The standard Radeon HD 5870 can drive three outputs simultaneously: any combination of the DVI and HDMI ports for up to 2 monitors, and a DisplayPort output independent of DVI/HDMI. Later this year you'll see a version of the card with six mini-DisplayPort outputs for driving six monitors.

It's not just hardware, there's a software component as well. The Radeon HD 5000 series driver allows you to combine all of these display outputs into one single large surface, visible to Windows and your games as a single display with tremendous resolution.

I set up a group of three Dell 24" displays (U2410s). This isn't exactly what Eyefinity was designed for since each display costs $600, but the point is that you could group three $200 1920 x 1080 panels together and potentially have a more immersive gaming experience (for less money) than a single 30" panel.

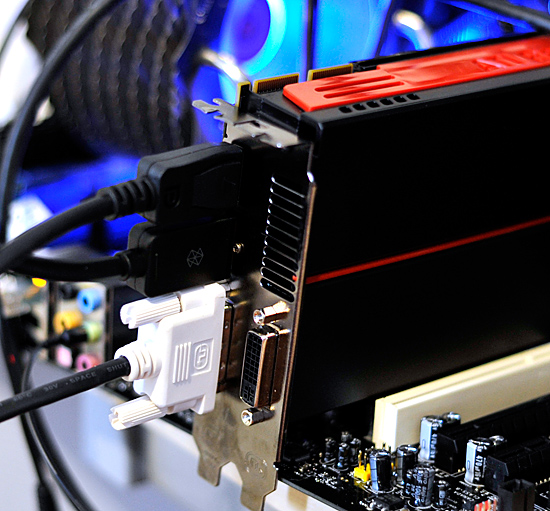

For our Eyefinity tests I chose to use every single type of output on the card, that's one DVI, one HDMI and one DisplayPort:

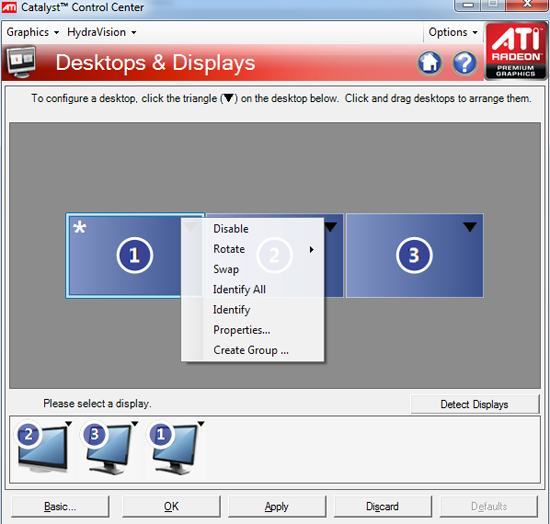

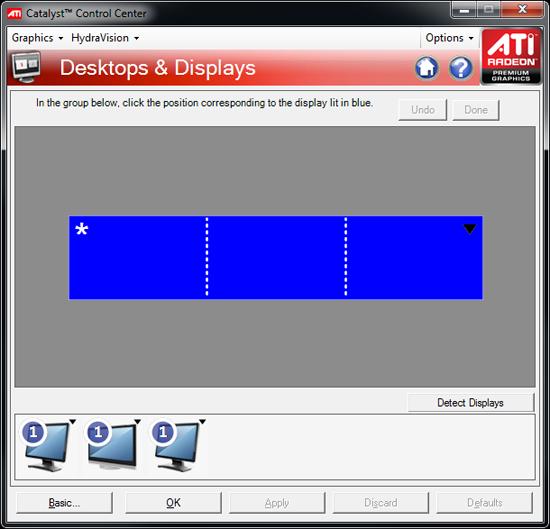

With all three outputs connected, Windows defaults to cloning the display across all monitors. Going into ATI's Catalyst Control Center lets you configure your Eyefinity groups:

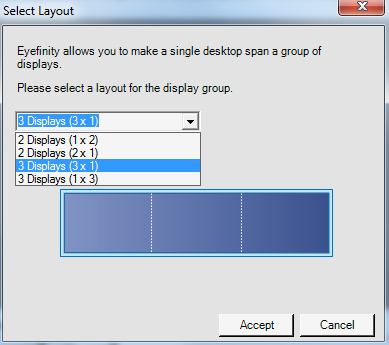

With three displays connected I could create a single 1x3 or 3x1 arrangement of displays. I also had the ability to rotate the displays first so they were in portrait mode.

You can create smaller groups, although the ability to do so disappeared after I created my first Eyefinity setup (even after deleting it and trying to recreate it). Once you've selected the type of Eyefinity display you'd like to create, the driver will make a guess as to the arrangement of your panels.

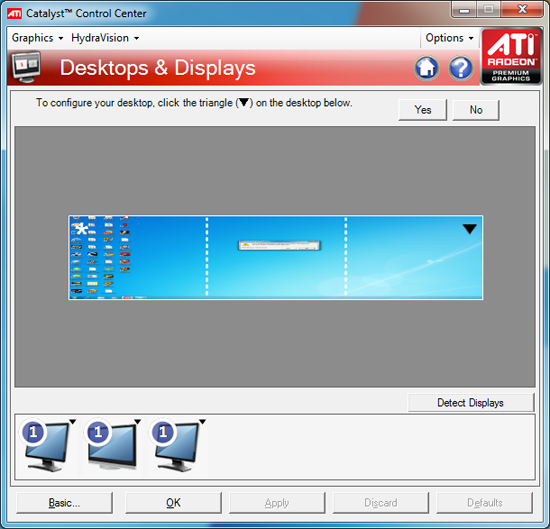

If it guessed correctly, just click Yes and you're good to go. Otherwise ATI has a handy way of determining the location of your monitors:

With the software side taken care of, you now have a Single Large Surface as ATI likes to call it. The display appears as one contiguous panel with a ridiculous resolution to the OS and all applications/games:

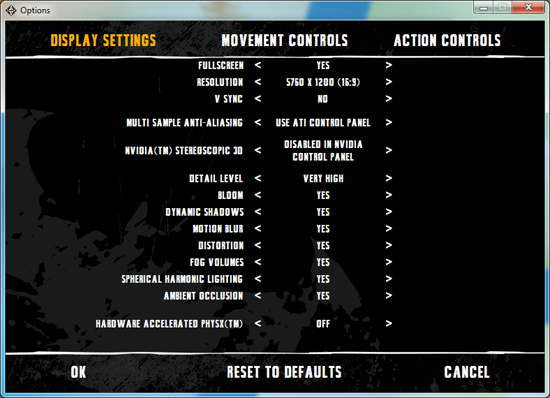

Three 24" panels in a row give us 5760 x 1200

The screenshot above should clue you into the first problem with an Eyefinity setup: aspect ratio. While the Windows desktop simply expands to provide you with more screen real estate, some games may not increase how much you can see - they may just stretch the viewport to fill all of the horizontal resolution. The resolution is correctly listed in Batman Arkham Asylum, but the aspect ratio is not (5760:1200 !~ 16:9). In these situations my Eyefinity setup made me feel downright sick; the weird stretching of characters as they moved towards the outer edges of my vision left me feeling ill.
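To put numbers on the aspect ratio problem, here is a minimal Python sketch of the arithmetic. It assumes identical panels and ignores bezel gaps; the function names are hypothetical and have nothing to do with ATI's driver or Catalyst Control Center.

```python
from fractions import Fraction


def single_large_surface(cols, rows, panel_w, panel_h):
    """Return (width, height) of a cols x rows group of identical panels."""
    return cols * panel_w, rows * panel_h


def aspect_ratio(width, height):
    """Reduce width:height to lowest terms, e.g. 1920x1200 -> (8, 5)."""
    ratio = Fraction(width, height)
    return ratio.numerator, ratio.denominator


# Three 1920 x 1200 panels side by side (the 3x1 landscape group).
width, height = single_large_surface(3, 1, 1920, 1200)
print(width, height)                # 5760 1200
print(aspect_ratio(width, height))  # (24, 5)
print(aspect_ratio(1920, 1200))     # (8, 5)
```

The group works out to 24:5 (4.8:1), far wider than the 16:9 (about 1.78:1) a game may assume, which is why content gets stretched when a title does not widen its field of view.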

Despite Oblivion's support for ultra wide aspect ratio gaming, by default the game stretches to occupy all horizontal resolution

Other games have their own quirks. Resident Evil 5 correctly identified the resolution but appeared to maintain a 16:9 aspect ratio without stretching. In other words, while my display was only 1200 pixels high, the game rendered as if it were 3240 pixels high and only fit what it could onto my screens. This resulted in unusable menus and a game that wasn't actually playable once you got into it.
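The 3240 figure follows from the width alone. A quick sketch of that arithmetic, assuming the game simply holds 16:9 at the full 5760-pixel width; the function name is hypothetical:

```python
def height_for_aspect(width, aspect_w, aspect_h):
    """Height a frame needs to keep aspect_w:aspect_h at the given width."""
    return width * aspect_h // aspect_w


implied_height = height_for_aspect(5760, 16, 9)
visible_fraction = 1200 / implied_height

print(implied_height)             # 3240
print(f"{visible_fraction:.0%}")  # 37%
```

Under that assumption only about 37% of the frame's height fits on the 1200-pixel-tall surface, which is consistent with menus running off the screens.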

Games with pre-rendered cutscenes generally don't mesh well with Eyefinity either. In fact, anything that's not rendered on the fly tends to only occupy the middle portion of the screens. Game menus are a perfect example of this:

There are other issues with Eyefinity that go beyond just properly taking advantage of the resolution. While the three-monitor setup pictured above is great for games, it's not ideal in Windows. You'd want your main screen to be the one in the center, however since it's a single large display your start menu would actually appear on the leftmost panel. The same applies to games that have a HUD located in the lower left or lower right corners of the display. In Oblivion your health, magic and endurance bars all appear in the lower left, which in the case above means that the far left corner of the left panel is where you have to look for your vitals. Given that each panel is nearly two feet wide, that's a pretty far distance to look.

The biggest issue that everyone worried about was bezel thickness hurting the experience. To be honest, bezel thickness was only an issue for me when I oriented the monitors in portrait mode. Sitting close to an array of wide enough panels, the bezel thickness isn't that big of a deal. Which brings me to the next point: immersion.

The game that sold me on Eyefinity was actually one that I don't play: World of Warcraft. The game handled the ultra wide resolution perfectly, it didn't stretch any content, it just expanded my viewport. With the left and right displays tilted inwards slightly, WoW was more immersive. It's not so much that I could see what was going on around me, but that whenever I moved forward I had the game world in more of my peripheral vision than I usually do. Running through a field felt more like running through a field, since there was more field in my vision. It's the only example where I actually felt like this was the first step towards the holy grail of creating the Holodeck. The effect was pretty impressive, although costly given that I only really attained it in a single game.

Before using Eyefinity for myself I thought I would hate the bezel thickness of the Dell U2410 monitors and I felt that the experience wouldn't be any more engaging. I was wrong on both counts, but I was also wrong to assume that all games would just work perfectly. Out of the four that I tried, only WoW worked flawlessly - the rest either had issues rendering at the unusually wide resolution or simply stretched the content and didn't give me as much additional viewspace to really make the feature useful. Will this all change given that in six months ATI's entire graphics lineup will support three displays? I'd say that's more than likely. The last company to attempt something similar was Matrox and it unfortunately didn't have the graphics horsepower to back it up.

The Radeon HD 5870 itself is fast enough to render many games at 5760 x 1200 even at full detail settings. I managed 48 fps in World of Warcraft and a staggering 66 fps in Batman Arkham Asylum without AA enabled. It's absolutely playable.
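For a rough sense of the load, the sketch below compares raw pixel counts. It assumes the 30" panel mentioned earlier runs at 2560 x 1600 (typical for panels of that size, but not stated in this section), and it treats pixels per second as a crude proxy for work, ignoring geometry, shading cost and memory bandwidth. The function names are made up for illustration.

```python
def pixel_count(width, height):
    """Total pixels in one frame."""
    return width * height


def pixels_per_second(width, height, fps):
    """Pixels drawn per second at a given frame rate."""
    return pixel_count(width, height) * fps


eyefinity = pixel_count(5760, 1200)
single_30 = pixel_count(2560, 1600)  # assumed 30" panel resolution

print(eyefinity)                            # 6912000
print(single_30)                            # 4096000
print(eyefinity / single_30)                # 1.6875
print(pixels_per_second(5760, 1200, 48))    # 331776000
print(pixels_per_second(5760, 1200, 66))    # 456192000
```

By this measure the three-panel surface carries roughly 1.7 times the pixels of a single 2560 x 1600 display.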

327 Comments


mapesdhs - Saturday, September 26, 2009 - link

> That is quite all right, you fellas make sure to read it all, ...

But that's the thing S.D., I pretty much don't read any of it. :D (does anyone?) First sentence only, then move on.

Ian.

SiliconDoc - Monday, September 28, 2009 - link

Oh, ha ha, another lowlife smart aleck. One has to wonder if you do as you say, and only read the first sentence, and move on, why you would care what I've typed, since you cannot imagine anyone does anything different. Heck you shouldn't even notice this, right liar ?

Yes, another liar, not amazing, not at all.

No need to modify or delete the sentence prior to this JaredWalton, smarty pants insulter won't read it, but I'm sure you can't resist, for "convenience's" sake of course.

Oh, I don't have to bring anything up on topic at all, because neither did lowlife skum not reading, he just got his nose awfully browner.

JarredWalton - Friday, September 25, 2009 - link

Very happy to have everyone here convinced you don't know what you're talking about? That's the only "truth" you've brought to this party. Marketing generally wants reputable people to promote a product - the "every man" approach. Funny that we don't see crazy people espousing products on TV (well, excepting stuff like Sham Wow!) Being crazy like you are in this thread only cements your status as someone who doesn't have a firm grip on reality - someone that can't be trusted. Thanks again for clearing that up so thoroughly.

I am very happy about it as well! :-D

erple2 - Tuesday, September 29, 2009 - link

Yeah, but that "Sham Wow" product works like a freakin' charm... http://www.popularmechanics.com/blogs/home_journal...

SiliconDoc - Wednesday, September 30, 2009 - link

I'm sure you spend your time drooling in front of a TV after you spank your joystick for fps, so know all about wacky commercials you have memorized, and besides, it's a pathetic, all you have left insult, off topic, who cares, pure hatred, no real response, and the 5870 double epic fail IS THE HOTTEST ATI CARD OF ALL TIME!

erple2 - Wednesday, September 30, 2009 - link

What's with the personal attacks? Does that mean that you concede defeat? Meh, you've no more credibility. Chill out.

SiliconDoc - Friday, September 25, 2009 - link

Yeah, now down to insults, since you lost everything else. Let's have your claimed specialty outlined here in context, let's have you come clean on LAPTOP GRAPHICS, and spread the truth about how NVIDIA is so far ahead and has been for quite some time, that it's a JOKE to buy a gaming laptop with ATI graphics on board.

Come on mister!

--

Now that is REALLY FUNNY ! You grabbed your arrogant unscrupulous self and proclaimed your fairness, but picked a spot where ati is completely EPIC FAIL, and NVIDIA is 1000% the only way to go, PERIOD, and left that MAJOR slap in the face high and dry.

--

Great job, yeah, you're the "sane one".

LOL

dieselcat18 - Saturday, October 3, 2009 - link

Nvidia fan-boy, troll, loser....take your gforce cards and go home...we can now all see how terrible ATi is thanks you ...so I really don't understand why people are beating down their doors for the 5800 series, just like people did for the 4800 and 3800 cards. I guess Nvidia fan-boy trolls like you have only one thing left to do and that's complain and cry like the itty-bitty babies that some of you are about the competition that's beating you like a drum.....so you just wait for your 300 series cards to be released (can't wait to see how many of those are available) so you can pay the overpriced premiums that Nvidia will be charging AGAIN !...hahaha...just like all that re-badging BS they pulled with the 9800 and 200 cards...what a joke !.. Oh my, I must say you have me in a mood and the ironic thing is I do like Nvidia as much as ATi, I currently own and use both. I just can't stand fools like you who spout nothing but mindless crap while waving your team flag (my card is better than your's..WhaaWhaaWhaa)...just take yourself along with your worthless opinions and slide back under that slimly rock you came from.

JarredWalton - Friday, September 25, 2009 - link

You've been insulting in this whole thread, so don't go crying to mamma about someone pointing that out. I did go and delete the posts from the person calling you gay and suggesting you should die in various ways, because as bad as you've been you haven't stooped quite that low (yet).

Laptop issues with ATI... you mean [like this](http://www.anandtech.com/mobile/showdoc.aspx?i=356...). Granted, I gave them a chance to address the issues. They failed and my full article on the various Clevo high-end notebooks will make it quite clear how far ahead NVIDIA is in the mobile sector right now.

"Fair" is treating both sides objectively. ATI has major problems with getting updated graphics drivers out on mobile products, and that's horrible. On the desktop, they don't have such issues for the most part. Yeah, you might have to wait a month or so for a driver update to fix the latest hot release and add CrossFire support... but you have to do the exact same thing for NVIDIA with about the same frequency. Only SLI and CF setups really need the regular driver updates, and in many cases the latest 18x and 19x NVIDIA drivers are slower than 16x and 17x on games that are older than six months.

Fair is also looking at these results and saying, "gee, I can get a 5870 for $400 (or $360 if you wait a few weeks for supply to bolster up), and that same card has no CrossFire or SLI wonkiness and costs less than the GTX 295 and 4870X2." Okay, 4870X2 and GTX 295 beat it in raw performance in some cases, but I don't think there's a single game where you can say one HD 5870 offers less than acceptable performance at 2560x1600, and I can guarantee there are titles that still have issues with SLI and CrossFire. (Yeah, you need to turn down some details in Crysis to get acceptable performance, but that's true of anything other than the top SLI and CF configs.) I would be more than happy to give up a bit of performance to avoid dealing with the whole multi-GPU ordeal. Why don't you tell us how innovative and awesome tri-SLI and quad-SLI are while you're at it?

At present, you have contributed more than 20% of the comments to this article, and not a single one has been anything but trolling. Screaming and yelling, insulting others, lying and making stuff up, all in support of a company that is just like any other big company. We don't ban accounts often, but you've more than warranted such action.

SiliconDoc - Friday, September 25, 2009 - link

I think it is more than absolutely clear, that in fact, I said my piece, my first post, and was absolutely attacked. I didn't attack, I got attacked, and in fact you have done plenty of attacking as well. I have also provided links, to back up my assertions and counter arguments, added the text for easy viewing, and pointed out in very specific detail what issues with bias I had and why.

--

Now you've claimed "all I've done is post FUD".

It is nothing short of amazing for you to even posit that, however I can certainly understand anyone pointing out the obvious bias problems (in the article no less) is "on thin ice", and after getting attacked, is solely blamed for "no facts".

---

I certainly won't disagree that the 5870 is a good value as appearing if especially if you don't like to deal with 2 cards or 2 cores.

But my posts never claimed otherwise. I first claimed it was not as good as wanted, was disappointing, and therefore was not the end of what ati had in store.

Since I have posted on the 5890, which will in fact be 512 bit.

Now, you don't like losing your points, or someone adept enough, smart enough, and accurate enough to counter them.

Sorry about that, and sorry that I won't just lay down, as more heaps are shovelled my way.

You skip my actual points, and go some other tangent.

1. PhysX is an advantage and best implementation so far.

your response: "It sucks because only 2 games are available"

---

Is that correct for you to do ? Is it not the very best so far ? Yes, it is in fact.

I have remained factual and reasonable, and glad enough to throw back when I'm attacked.

But the fact remains, I have made absolutely solid 100% points no matter how many times you claim " lying and making stuff up, "

---

Yet of course, what I just said about NVIDIA and laptop chips, you agreed with. So according to your own characterization (quite unfair), all you do is scream and lie, too.

Just wonderful.

The GT300 is going to blow this 5870 away - the stats themselves show it, and betting otherwise is a bad joke, and if sense is still about, you know it as well.