AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

The Race is Over: 8-channel LPCM, TrueHD & DTS-HD MA Bitstreaming

It's now been over a year since I first explained the horrible state of Blu-ray audio on the PC. I'm not talking about music discs, but rather the audio component of any Blu-ray movie. It boils down to this: without an expensive sound card it's impossible to send compressed Dolby TrueHD or DTS-HD Master Audio streams from your HTPC to an AV receiver or pre-processor. Thankfully AMD, Intel and later NVIDIA gave us a stopgap solution that allowed HTPCs, when equipped with the right IGP/GPU, to decode those high-definition audio streams and send them uncompressed over HDMI. The feature is commonly known as 8-channel LPCM support and without it all high end HTPC users would be forced into spending another $150 - $250 on a sound card like the Auzentech HomeTheater HD I just recently reviewed.

For a while I'd heard that ATI was dropping 8-channel LPCM support from RV870 because of cost issues. Thankfully, those rumors turned out to be completely untrue. Not only does the Radeon HD 5870 support 8-channel LPCM output over HDMI like its predecessor, but it can now also bitstream Dolby TrueHD and DTS-HD MA. It is the first and only video card to be able to do this, but I expect others to follow over the next year.

The Radeon HD 5870 is first and foremost a card for gamers, so unless you're building a dual-purpose HTPC, this isn't the one you're going to want to use. If you can wait, the smaller derivatives of the RV870 core will also have bitstreaming support for TrueHD/DTS-HD MA. If you can't and have a deep enough HTPC case, the 5870 will work.

In addition to full bitstreaming support, the 5870 also features ATI's UVD2 (Universal Video Decoder). The engine allows for complete hardware offload of all H.264, MPEG-2 and VC1 decoding. There haven't been many changes to the UVD2 engine; you can still run all of the color adjusting post-processing effects and accelerate a maximum of two 1080p streams at the same time.

ATI claims that the GPU now supports Blu-ray playback/acceleration in Aero mode, but I found that in my testing the UI still defaulted to basic mode.

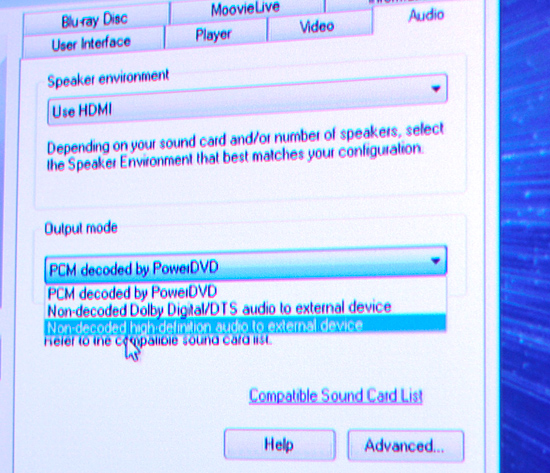

To take advantage of the 5870's bitstreaming support I had to use a pre-release version of Cyberlink's PowerDVD 9. The public version of the software should be out in another week or so. To enable TrueHD/DTS-HD MA bitstreaming you have to select the "Non-decoded high-definition audio to external device" option in the audio settings panel:

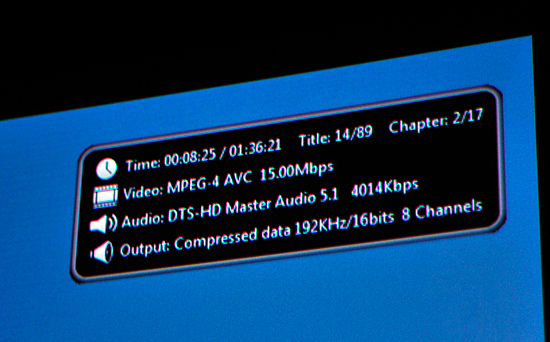

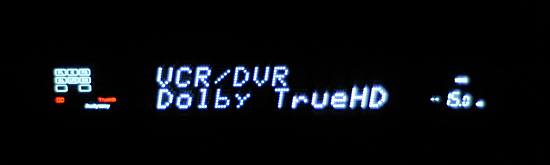

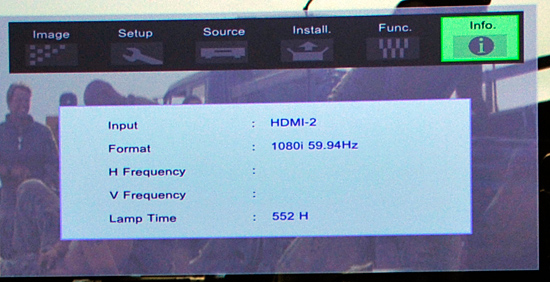

With that selected the player won't attempt to decode any audio but rather pass the encoded stream over HDMI to your receiver. In this case I had an Integra DTC-9.8 on the other end of the cable and my first test was Bolt, a DTS-HD MA title. Much to my amazement, it worked on the first try:

No HDCP errors, no strange player issues, nothing - it just worked.

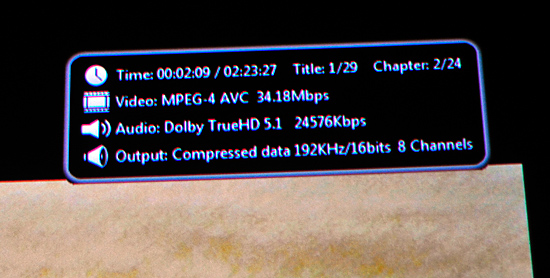

Next up was Dolby TrueHD. I tried American History X first but the best I could get out of it was Dolby Digital. I swapped in Transformers and found the same. This ended up being an issue with the early PowerDVD 9 build, similar to issues with the version of the player needed for the Auzentech HomeTheater HD. Switching audio output modes a couple of times seemed to fix the problem, and I now had both DTS-HD MA and Dolby TrueHD bitstreaming from the Radeon HD 5870 to my receiver.

One strange artifact during my testing was that the 5870 apparently delivered 1080i output to my JVC RS2 projector. I'm not exactly sure what went wrong here, as 1080p wasn't an issue on any other display I used. I ran out of time before I could figure out the cause of the problem, but I expect it's an early compatibility issue.

I can't begin to express how relieving it is to finally have GPUs that implement a protected audio path capable of handling these overly encrypted audio streams. Within a year everything from high end GPUs to chipsets with integrated graphics will have this functionality.

327 Comments


Zool - Sunday, September 27, 2009 - link

The speed of the on-chip cache just shows that the external memory bandwidth in current GPUs is only there to get the data to the GPU or receive the final data from the GPU. The raw processing happens on chip with that 10 times faster SRAM cache, or else the raw teraflops would vanish.

JarredWalton - Sunday, September 27, 2009 - link

If SD had any reading comprehension or understanding of tech, he would realize that what I am saying is:

1) Memory bandwidth didn't double - it went up by just 23%

2) Look at the results and performance increased by far more than 23%

3) Ergo, the 4890 is not bandwidth limited in most cases, and there was no need to double the bandwidth.

Would more bandwidth help performance? Almost certainly, as the 5870 is such a high performance part that unlike the 4890 it could use more. Similarly, the 4870X2 has 50% more bandwidth than the 5870, but it's never 50% faster in our tests, so again it's obviously not bandwidth limited.

Was it that hard to understand? Nope, unless you are trying to pretend I put an ATI bias on everything I say. You're trying to start arguments again where there was none.

SiliconDoc - Sunday, September 27, 2009 - link

The 4800 data rate ram is faster vs former 3600 - hence bus width is running FASTER - so your simple conclusions are wrong.

When we overclock the 5870's ram, we get framerate increase - it increases the bandwidth, and up go the numbers.

---

Not like there isn't an argument, because you don't understand tech.

JarredWalton - Sunday, September 27, 2009 - link

The bus is indeed faster -- 4800 effective vs. 3900 on the 4890 or 3600 on the 4870. What's "wrong about my simple conclusions"? You're not wrong, but you're not 100% right if you suggest bandwidth is the only bottleneck.

Naturally, as most games are at least partially bandwidth limited, if you overclock 10% you increase performance. The question is, does it increase linearly by 10%? Rarely, just as if you overclock the core 10% you usually don't get 10% boost. If you do get a 1-for-1 increase with overclocking, it indicates you are solely bottlenecked by that aspect of performance.
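The 1-for-1 test described above can be put into numbers as a scaling ratio: the relative performance gain divided by the relative clock gain. The following is only a rough Python sketch; the function name and every figure in it are hypothetical and are not measurements from the review.

```python
def scaling_efficiency(clock_before, clock_after, fps_before, fps_after):
    """Return the share of a clock increase that shows up as extra performance.

    A result near 1.0 suggests the overclocked part is the sole bottleneck;
    a result well below 1.0 suggests something else is also limiting.
    """
    clock_gain = clock_after / clock_before - 1
    perf_gain = fps_after / fps_before - 1
    if clock_gain <= 0:
        raise ValueError("clock_after must be higher than clock_before")
    return perf_gain / clock_gain


# Hypothetical example: a 10% memory overclock that yields 5% more fps.
memory_oc = scaling_efficiency(1200, 1320, 60.0, 63.0)

# Hypothetical example: a 10% core overclock that yields 10% more fps.
core_oc = scaling_efficiency(850, 935, 60.0, 66.0)

print(f"memory overclock scaling: {memory_oc:.2f}")
print(f"core overclock scaling:   {core_oc:.2f}")
```

With these made-up inputs the memory overclock scales at about half the clock increase, which would point to a partial rather than a complete bandwidth limit.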

So my conclusions still stand: the 5870 is more bandwidth limited than 4890, but it is not completely bandwidth limited. Improving the caches will also help the GPU deal with less bandwidth, just as it does on CPUs. As fast as Bloomfield may be with triple-channel DDR3-1066 (25.6GB/s), the CPU can process far more data than RAM could hope to provide. Would a wider/faster bus help the 5870? Yup. Would it be a win-win scenario in terms of cost vs. performance? Apparently ATI didn't think so, and given how quickly sales numbers taper off above $300 for GPUs, I'm inclined to agree.

I'd also wager we're a lot more CPU limited on 5870 than many other GPUs, particularly with CrossFire setups. I wouldn't even look at 5870 CrossFire unless you're running a high-end/overclocked Core i7 or Phenom II (i.e. over ~3.4GHz).

And FWIW: Does any of this mean NVIDIA can't go a different route? Nope. GT300 can use 512-bit interfaces with GDDR5, and they can be faster than 5870. They'll probably cost more if that's the case, but then it's still up to the consumers to decide how much they're willing to spend.
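The bandwidth figures quoted in the comments above can be checked with simple arithmetic: peak bandwidth is the bus width in bytes multiplied by the effective data rate. A minimal Python sketch follows. The data rates are the ones quoted in the thread, while the 256-bit GPU bus widths and the 64-bit DDR3 channel width are assumptions, since the thread does not state them.

```python
def peak_bandwidth_gbs(bus_width_bits, data_rate_mtps, devices=1):
    """Peak memory bandwidth in GB/s.

    bus_width_bits: width of one memory interface in bits
    data_rate_mtps: effective data rate in millions of transfers per second
    devices: number of identical interfaces (GPUs or memory channels)
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * data_rate_mtps * devices / 1000


# Effective data rates are the ones quoted in the thread.
# The 256-bit bus widths are an assumption, not stated in the thread.
hd4870 = peak_bandwidth_gbs(256, 3600)
hd4890 = peak_bandwidth_gbs(256, 3900)
hd5870 = peak_bandwidth_gbs(256, 4800)
hd4870x2 = peak_bandwidth_gbs(256, 3600, devices=2)  # two GPUs, each with its own memory

# Triple-channel DDR3-1066, assuming 64-bit channels; 1066 is the nominal rate.
bloomfield = peak_bandwidth_gbs(64, 1066, devices=3)

print(f"5870 vs 4890:   {hd5870 / hd4890 - 1:+.0%}")
print(f"4870X2 vs 5870: {hd4870x2 / hd5870 - 1:+.0%}")
print(f"Bloomfield DDR3-1066 x3: {bloomfield:.1f} GB/s")
```

Under those assumptions the results land at roughly 23% more bandwidth for the 5870 over the 4890, 50% more for the 4870X2 over the 5870, and about 25.6GB/s for triple-channel DDR3-1066, in line with the numbers quoted above.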

silverblue - Saturday, September 26, 2009 - link

I suppose if we end up seeing a 512-bit card then it'll make for a very interesting comparison with the 5870. With equal clocks during testing, we'd have a far better idea, though I'd expect to see far more RAM on a 512-bit card which may serve to skew the figures and muddy the waters, so to speak.

Voo - Friday, September 25, 2009 - link

Hey Jarred I know that's neither the right place nor the right person to ask, but do we get some kind of "Ignore this person" button with the site revamp Anand talked about some months ago?

I think I'd prefer this feature about almost everything - even an edit button ;)

JarredWalton - Friday, September 25, 2009 - link

I'll ask and find out. I know that the comments are supposed to receive a nice overhaul, but more than that...? Of course, if you ignore his posts on this (and the responses), you'd only have about five comments! ;-)

Voo - Saturday, September 26, 2009 - link

Great!

Yep it'd be rather short, but I'd rather have 10 interesting comments than 1000 COMMENTS WRITTEN IN CAPS!!11 with dubious content ;)

SiliconDoc - Wednesday, September 30, 2009 - link

I put it in caps so you could easily avoid them, I was thinking of you and your "problems".

I guess since you "knew this wasn't the right time or place" but went ahead anyway, you've got "lot's of problems".

Let me know when you have posted an "interesting comment" with no "dubious nature" to it.

I suspect I'll be waiting years.

MODEL3 - Friday, September 25, 2009 - link

Hi Ryan,

Nice new info in your review.

The day you posted your review, I wrote in the forums that, as I see it, there are other reasons besides bandwidth limitations and driver maturity that the 850MHz 5870 hasn't doubled its performance relative to an 850MHz 4890.

Usually when a GPU has 2X the specs of another GPU the performance gain is 2X (of course I am not talking about games with engines that are CPU limited, that seem to scale badly, or that are poorly coded, for example).

There are many examples in the past that we had 2X performance gain with 2X the specs. (not in all the games, but in many games)

From the tests that i saw in your review and from my understanding of the AMD slides, i think there are 2 more reasons that 5870 performs like that.

The day of your review I posted in the forums the additional reasons why I think the 5870 performs like that, but nobody replied to me.

I wrote that probably 5870 has:

1.Geometry/vertex performance issues (in the sense that it cannot generate 2X geometry in relation with 4890) (my main assumption)

or/and

2.Geometry/vertex shading performance issues (in the sense that the geometry shader [GS] cannot shade vertices at 2X the speed of the 4890) (another possible assumption)

I guess there are synthetic benchmarks that have tests like that (pure geometry speed, and pure geometry/vertex shader speed, in addition with the classic pixel shader speed tests) so someone can see if my assumption is true.

If you have the time and you think that this is possible and you feel like it is worth your time, can you check my hypothesis please?

Thanks very much,

MODel3