MultiGPU Update: Does 3-way Make Sense?

by Derek Wilson on February 25, 2009 2:45 PM EST- Posted in

- GPUs

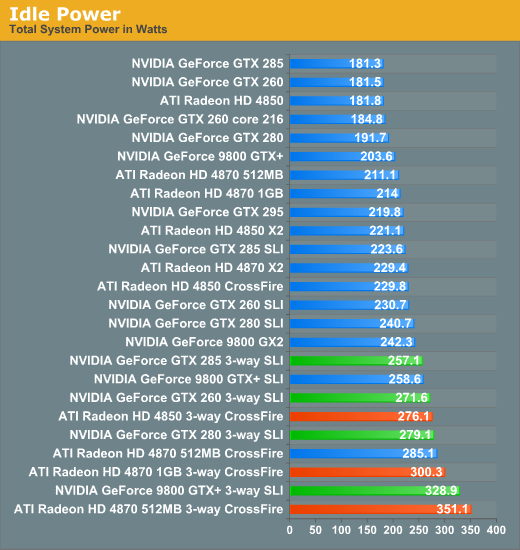

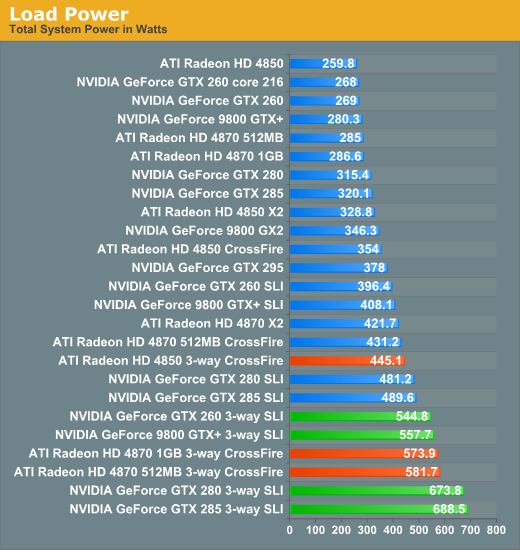

Power Consumption

No surprises here, really: 3-way hardware requires more power at both idle and load. Remember that these numbers are total system power consumption, measured while running the 3DMark Vantage POM shader test, so the rest of the system isn't pushed very hard at all. Depending on the game, you could expect real load power draw to be 50 to 100W higher.

While NVIDIA hardware seems to handle idle power a bit better, at load 3-way SLI tends to require much more than 3-way CrossFire. But this is in line with what we saw with 2-way solutions as well.

46 Comments


7Enigma - Thursday, February 26, 2009 - link

http://www.anandtech.com/mb/showdoc.aspx?i=3506&am...

Just a quick review (would have preferred to see the upper resolutions tested....wait did I just write that?), but it shows that with CF you have a pretty competitive war. I would guess going tri-gpu (if the game supports it well) the i7 would pull ahead by quite a bit, especially when OC'd, but that remains to be seen.

Mr Roboto - Thursday, February 26, 2009 - link

BORING! Not to sound like a total dick but seriously, do we need 2 articles (with probably a third to come discussing quad GPU) to find out what a total waste multi GPU is? I mean until NV and ATI invest some serious research into hardware based SLI\XFire I'd never even consider buying two GPUs. The current software based multi GPU setups don't offer enough of a reward. Having to use a software based profile means you'll always be waiting for them to create one for the latest games, while some simply won't get one at all.

Software in general is lagging so far behind current hardware that there is practically ZERO use for two GPUs let alone 3-4. Someone please name 10 games that can successfully use more than 2 cores at a time. OK now name 10 software applications that do the same (not counting video encoding apps). It's pathetic how bad it is. Look at all the XBox360 ports built on 5 year old hardware that are coming out in rapid succession and you have a pretty glib picture.

Still waiting for some Lucid Hydra benchmarks showing 100% linear scaling. If it actually materializes on the desktop then I would consider multi GPU.

7Enigma - Thursday, February 26, 2009 - link

I find these articles well done and beneficial. The stereotype that tri/quad/sometimes even dual is a waste is just that: a stereotype. For games that are coded well for multi-gpu and when you are on a large display I'm actually a bit impressed with the multi-gpu stats. A year ago you would likely not see even near the improvement from a single card. It's a testament to the drivers of the gpu manufacturers and as importantly the game programmers.

What this also shows is games that are poorly suited for multi-gpu (either due to poor coding/drivers or bottlenecked at another point). Sure most of us don't have the 30" display that warrants the tri and upcoming quad articles, but for those that do it is not a waste, and look further down the rabbit hole to see where we will be in another 2 years or so with a single-gpu card.

We have already pretty much approached a saturation point for mainstream graphics gaming (<$300) under 24" (1920X1200). That has to be dangerous for ATI/Nvidia because we are now becoming limited by our display size as opposed to the historical trend of ever demanding games keeping us at pretty much the same resolution year after year (with slow progress from 800X600 to 1024X768 to the now most common 1280X1024, to the quickly becoming gaming standard 22", and finally within a year or two to 24" gaming goodness).

What I imagine you will see in the next year or two is heavy reliance on physics calculations on the gpu to burden them greatly bringing us back to the near always present gpu-limited status. If game makers fail to implement some serious gpu demands above and beyond what is being used today pretty soon we won't need to upgrade video cards near as frequently. And for the consumer that is actually a really good thing...

Pakman333 - Thursday, February 26, 2009 - link

"BORING! Not to sound like a total dick"

You do, and you probably are.

If you don't care, then why read it? No one is forcing you to.

poohbear - Thursday, February 26, 2009 - link

props for posting such a quick follow up! sometimes hardware sites promise follow-ups & never deliver. ;)

Jynx980 - Thursday, February 26, 2009 - link

Nice job on the resolution options for the graphs! This should alleviate frustration on both ends. I hope it's the standard from now on.

rcr - Thursday, February 26, 2009 - link

I read some time ago that the GTX285 will draw less power than a GTX280. But the graph of power consumption here is also made with 3xGTX280OC.

ilkhan - Thursday, February 26, 2009 - link

As a reader I hate going back and forth between pages. I'd love to see the price for each option on the right hand side of the performance graphs. Would make it super easy to compare the value of each solution, rather than the separate graphs you are using here.

And on the plus side for 4 way scaling, at least there's only 4 cards to bench (9800GX2, 4850X2, 4870X2, and the GTX295). Maybe a couple more for memory options, but a lot less than the 3-way here.

LiLgerman - Wednesday, February 25, 2009 - link

i currently have a 4870, 512mb TOP from asus and i was wondering if i can pair that with a 1gb model. Will i have to down clock my 4870 TOP to the speed of the 1gb model if i do crossfire?

Thanks

DerekWilson - Thursday, February 26, 2009 - link

AMD is good with asynchronous clocking (one can be faster than the other) ... but i believe memory sizes must match -- i know you could still put them in crossfire, but i /think/ that 512MB of the memory on the 1GB card will be disabled to make them work together.

this is just what i remember off the top of my head though.