µATX Part 1: ATI Radeon Xpress 1250 Performance Review

by Gary Key on August 28, 2007 7:00 AM EST - Posted in Motherboards

Memory Testing

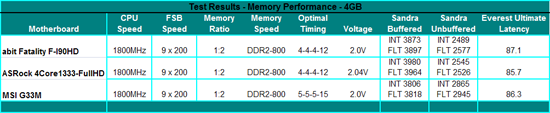

All of our boards were able to run 4GB of OCZ HPC Reaper at DDR2-800 speeds on 2.04V or less. Our optimal timings for the two X1250 boards were 4-4-4-12, while we had to run 5-5-5-15 on the MSI G33M board. The MSI board did not care for CAS4 settings with 4GB installed, but its overall memory results are still very competitive. In fact, its Sandra unbuffered scores are around 12% better than those of our X1250 boards, and this advantage will be apparent in a couple of our application benchmarks that rely on memory throughput and low latency.
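To put the CAS4 versus CAS5 difference in perspective, the short Python sketch below converts DDR2 timings from clock cycles to nanoseconds. It assumes the usual convention that DDR2 timings are counted in I/O bus clock cycles, which run at half the data rate (400 MHz for DDR2-800); the function names are our own, for illustration only.

```python
def cycle_time_ns(data_rate_mts: float) -> float:
    """Length of one I/O bus clock cycle in ns (bus clock = data rate / 2)."""
    bus_clock_mhz = data_rate_mts / 2
    return 1000.0 / bus_clock_mhz


def timings_ns(data_rate_mts: float, timings: str) -> list[float]:
    """Convert a 'CL-tRCD-tRP-tRAS' string such as '4-4-4-12' to nanoseconds."""
    t_ck = cycle_time_ns(data_rate_mts)
    return [int(cycles) * t_ck for cycles in timings.split("-")]


if __name__ == "__main__":
    # Timings taken from our test results; DDR2-800 for both configurations.
    for label, timings in [("X1250 boards", "4-4-4-12"), ("MSI G33M", "5-5-5-15")]:
        values = ", ".join(f"{t:.1f}" for t in timings_ns(800, timings))
        print(f"{label}: DDR2-800 {timings} -> {values} ns")
```

On these assumptions, each CAS access on the MSI board takes 12.5 ns instead of 10 ns, yet it still posted the higher Sandra scores, so primary timings alone do not decide memory throughput.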

abit Fatality F-I90HD Memory Tests

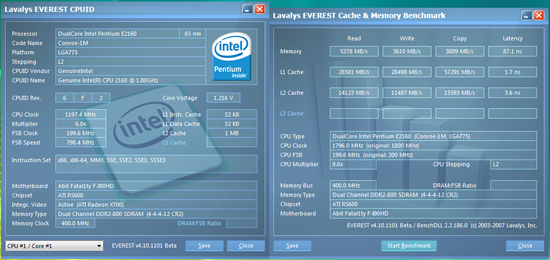

We were able to set our timings to 4-4-4-12 at DDR2-800 by increasing the memory voltage to 2.0V. This offered the best possible latencies and memory throughput on this board, since memory overclocking is not available in the current BIOS. By increasing the voltage to 2.10V, we were able to tighten the timings to 4-4-3-10 with 4GB installed, but there was no measurable difference in benchmark results, since the memory sub-timings were relaxed to compensate for the tighter primary timings. Until the 1.4 BIOS release, the board was very picky about booting with memory that did not allow or utilize 1.8V at POST, and we could not boot several memory modules from a variety of suppliers that were set to operate at 2.0V+ in normal use. This problem has been greatly reduced in later BIOS versions, and we have since booted 4GB configurations with these same modules.
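The paper gain from 4-4-3-10 can be estimated from the row cycle time, approximated here as tRAS + tRP (roughly the minimum time before a bank can open a new row). This Python sketch assumes the four numbers are CL-tRCD-tRP-tRAS in DDR2-800 clock cycles of 2.5 ns each; the `PrimaryTimings` class is our own illustration, and it deliberately ignores the sub-timings, which is where the gain was given back in our testing.

```python
from typing import NamedTuple

DDR2_800_CYCLE_NS = 2.5  # 400 MHz I/O bus clock for DDR2-800 (assumed timing unit)


class PrimaryTimings(NamedTuple):
    cl: int
    trcd: int
    trp: int
    tras: int

    @classmethod
    def parse(cls, text: str) -> "PrimaryTimings":
        """Parse a 'CL-tRCD-tRP-tRAS' string such as '4-4-3-10'."""
        return cls(*(int(part) for part in text.split("-")))

    def row_cycle_ns(self, cycle_ns: float = DDR2_800_CYCLE_NS) -> float:
        """Approximate row cycle time tRC = tRAS + tRP, in nanoseconds."""
        return (self.tras + self.trp) * cycle_ns


if __name__ == "__main__":
    for text in ("4-4-4-12", "4-4-3-10"):
        timings = PrimaryTimings.parse(text)
        print(f"{text}: tRC ~ {timings.row_cycle_ns():.1f} ns")
```

On these assumptions tRC drops from 40 ns to 32.5 ns, a gain the relaxed sub-timings evidently cancelled out in our benchmarks.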

ASRock 4Core1333-FullHD Memory Tests

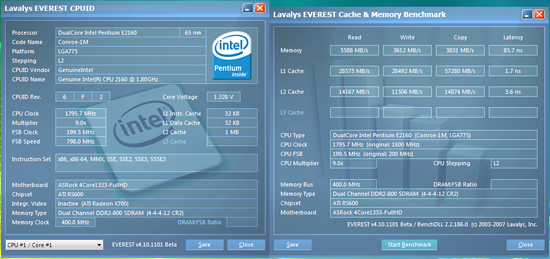

As with our abit board, the optimal settings at DDR2-800 with our 4GB OCZ HPC Reaper configuration are 4-4-4-12, although the additional memory timing options in this BIOS let us dial tRFC down from 42 on the abit board to 28 on this ASRock board. This change, along with ASRock's generally more aggressive memory sub-timings, resulted in slightly lower latencies and higher memory throughput in our Everest benchmarks. We had to set our memory voltage to 2.04V to complete our benchmark tests, though the board otherwise booted and ran fine at 1.8V.
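tRFC is how long a rank is unavailable while it refreshes, so cutting it from 42 to 28 cycles shrinks the share of time the memory spends refreshing. The sketch below assumes the BIOS values are in 2.5 ns DDR2-800 clock cycles and uses the standard DDR2 average refresh interval (tREFI) of 7.8 µs at normal operating temperature; it is a back-of-the-envelope estimate, not a model of either memory controller.

```python
CYCLE_NS = 2.5      # DDR2-800 I/O bus clock period (assumed unit of the BIOS tRFC value)
TREFI_NS = 7800.0   # DDR2 average refresh interval at normal temperature (7.8 us)


def refresh_overhead(trfc_cycles: int) -> tuple[float, float]:
    """Return (tRFC in ns, fraction of each refresh interval spent refreshing)."""
    trfc_ns = trfc_cycles * CYCLE_NS
    return trfc_ns, trfc_ns / TREFI_NS


if __name__ == "__main__":
    for board, trfc in (("abit F-I90HD", 42), ("ASRock 4Core1333-FullHD", 28)):
        ns, share = refresh_overhead(trfc)
        print(f"{board}: tRFC {trfc} cycles = {ns:.0f} ns, {share:.2%} of each tREFI")
```

A gap of under half a percentage point of refresh time fits the modest improvement we measured, which also reflects ASRock's other sub-timings.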

ASRock also offers an option to set the memory speed to DDR2-1066, although our memory is not capable of those speeds. We tried a few DDR2-1066 modules from OCZ, Corsair, and G.Skill that worked at 5-5-5-15 timings with 2.04V. Our benchmark results in actual applications did not vary by more than 1% with the E2160, so in our opinion DDR2-800 at CAS4 timings is optimal for this board. Interestingly enough, our memory scores also didn't vary by more than 1% when using an external graphics card.
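The arithmetic below suggests why DDR2-1066 at CAS5 did not move our application results: its CAS latency in nanoseconds is about the same as DDR2-800 at CAS4, and its extra peak bandwidth may simply go unused. The Pentium Dual-Core E2160 uses an 800 MT/s, 64-bit front-side bus; treating that bus as the ceiling on CPU memory traffic, and assuming dual-channel operation, are our simplifying assumptions, and all figures are theoretical peaks rather than measurements.

```python
BUS_WIDTH_BYTES = 8  # one 64-bit memory channel, or the 64-bit front-side bus


def cas_latency_ns(data_rate_mts: float, cas: int) -> float:
    """CAS latency in ns; DDR2 timings count I/O clock cycles at half the data rate."""
    return cas * 2000.0 / data_rate_mts


def peak_bandwidth_gbs(data_rate_mts: float, channels: int = 1) -> float:
    """Theoretical peak transfer rate in GB/s (10^9 bytes per second)."""
    return data_rate_mts * BUS_WIDTH_BYTES * channels / 1000.0


if __name__ == "__main__":
    fsb = peak_bandwidth_gbs(800)  # E2160 front-side bus, assumed to be the bottleneck
    for rate, cas in ((800, 4), (1066, 5)):
        print(
            f"DDR2-{rate} CL{cas}: {cas_latency_ns(rate, cas):.2f} ns CAS, "
            f"{peak_bandwidth_gbs(rate, channels=2):.1f} GB/s dual-channel peak "
            f"vs {fsb:.1f} GB/s FSB"
        )
```

On these figures, both memory configurations already offer more peak bandwidth than a 6.4 GB/s front-side bus can carry, and their CAS latencies differ by well under a nanosecond.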

22 Comments

Sargo - Tuesday, August 28, 2007

Nice review, but there's no X3100 on the Intel G33. The GMA 3100 (http://en.wikipedia.org/wiki/Intel_GMA#GMA_3100) is based on a much older architecture, so even the new drivers won't help that much.

ltcommanderdata - Tuesday, August 28, 2007

Exactly. The G33 was never intended to replace the G965 chipset; it replaces the 945G chipset and the GMA 950. The G33's IGP is not the GMA X3100 but the GMA 3100 (no "X"), and it is virtually identical to the GMA 950, with higher clock speeds and better video support. The GMA 950, GMA 3000, and GMA 3100 all have only SM2.0 pixel shaders, with no vertex shaders and no hardware T&L engine. The G965 and the GMA X3000 remain the top Intel IGP until the launch of the G35 and GMA X3500. I can't believe AnandTech made such an obvious mistake, but I have to admit Intel isn't helping matters with their ever-expanding portfolio of IGPs. Here's Intel's nice PR chart explaining the different IGPs:

http://download.intel.com/products/graphics/intel_...

Could you please run a review with the G965 chipset and the GMA X3100 using XP and the latest 14.31 drivers? They are now out of beta, and Intel claims full DX9.0c SM3.0 hardware acceleration. I would love to see the GMA X3000 compared with the common GMA 950 (also supported in the 14.31 drivers, although it has no VS to activate), the Xpress X1250, the GeForce 6150 or 7050, and some low-end GPUs like the X1300 or HD 2400. A comparison between the 14.31 drivers and the previous 14.29 drivers, which had no hardware support, would also show how much performance has improved.

JarredWalton - Tuesday, August 28, 2007

I did look at gaming performance with a 965GM chipset in the PC Club ENP660 review (http://www.anandtech.com/mobile/showdoc.aspx?i=306...). However, that was also tested under Vista. I would assume that with drivers working in all respects, GMA X3100 performance would probably come close to that of the Radeon Xpress 1250, but when will the drivers truly reach that point? In the end, IGP is still only sufficient for playing with all the details turned down at 1280x800 or lower resolutions, at least in recent titles. Often it can't even do that, and 800x600 might be required. Want to play games at all? Just spend the $120 on something like an 8600 GT.

IntelUser2000 - Wednesday, August 29, 2007

It has the drivers for XP.

JarredWalton - Wednesday, August 29, 2007

Unless the XP drivers are somehow 100% faster (or more) than the last Vista drivers I tried, it still doesn't matter. Minimum details in Battlefield 2 at 800x600 got around 20 FPS. It was sort of playable, but nothing to write home about. Half-Life 2 engine stuff is still totally messed up on the chipset; it runs in DX9 mode, but it gets <10 FPS regardless of resolution.

IntelUser2000 - Wednesday, August 29, 2007

I get 35-45 FPS in the single-player demo for the first 5 minutes at 800x600 with minimum details. I didn't check further, as the demo is limited. My system:

- E6600
- DG965WH
- 14.31 production driver
- 2x1GB DDR2-800
- WD360GD Raptor 36GB
- WinXP SP2

IntelUser2000 - Tuesday, September 11, 2007

Jarred, PLEASE PROVIDE THE DETAILS OF THE BENCHMARK/SETTINGS/PATCHES used for BF2 so I can replicate the testing you did for the Part 1 article. For example:

- What version of BF2 was used
- Which demos are supposed to be used
- How do I load the demos
- etc.

R101 - Tuesday, August 28, 2007

Just for the fun of it, so we can see what the X3100 can do with these new betas. I've been looking for that test since those drivers came out, and still nothing.

erwos - Tuesday, August 28, 2007

I'm looking forward to seeing the benchmarks on the G35 motherboards (which I'm sure won't be in this series). The X3500 really does seem to have a promising feature set, at least on paper.

Lonyo - Tuesday, August 28, 2007

Bioshock requires SM3.0.