NVIDIA's GeForce 8800 (G80): GPUs Re-architected for DirectX 10

by Anand Lal Shimpi & Derek Wilson on November 8, 2006 6:01 PM EST - Posted in GPUs

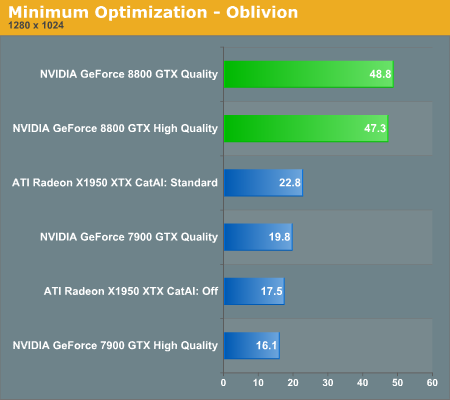

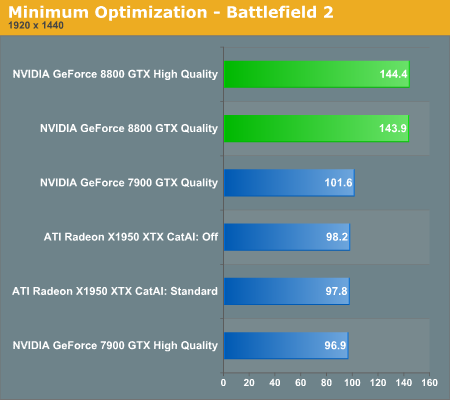

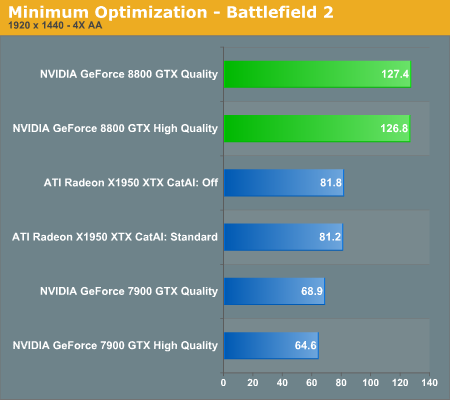

The thing to remember is that, even when all optimizations are disabled, there are other optimizations going on that we can't touch. There always will be. The better these optimizations get, the faster we will be able to render accurate images. Gaining more control over what happens in the hardware is a nice bonus, but disabling optimization for no reason just doesn't make sense. Thus, our tests will be done at default texture filtering quality on NVIDIA hardware. In order to understand the performance impact of High Quality vs. Quality texture filtering on NVIDIA hardware, we ran a few benchmarks with as many optimizations disabled as possible and compared the result to our default quality tests. Here's what we get:

We can clearly see that G70 takes a performance hit from enabling high quality mode, but that G80 is able to take it in stride. While we don't have the ability to specifically disable or enable optimizations in ATI hardware, Catalyst AI is the feature that dictates how much liberty ATI is able to take with a game, from filtering optimizations all the way to shader replacement. We can't tell if the difference we see in Oblivion is due to shader replacement, filtering, or some other optimization under R580.
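The comparison above comes down to a relative frame-rate change between the default quality run and the high quality run. Here is a minimal sketch of that arithmetic in Python. The function name and the sample frame rates are illustrative placeholders only; they are not measurements from this review.

```python
def quality_mode_hit(default_fps: float, high_quality_fps: float) -> float:
    """Return the percentage of performance lost when moving from
    default (Quality) filtering to High Quality filtering.

    A positive result means High Quality mode is slower.
    """
    if default_fps <= 0:
        raise ValueError("default_fps must be positive")
    return (default_fps - high_quality_fps) / default_fps * 100.0


# Hypothetical frame rates, chosen only to show the arithmetic.
hit = quality_mode_hit(default_fps=60.0, high_quality_fps=54.0)
print(f"{hit:.1f}% slower in High Quality mode")
```

A card that "takes it in stride" would show a result near zero here, while a card that relies heavily on filtering optimizations would show a noticeably larger percentage.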

111 Comments


JarredWalton - Wednesday, November 8, 2006

The text is basically complete, and minor spelling issues aren't going to change the results. Obviously, proofing 29 pages of article content is going to take some time. We felt our readers would be a lot more interested in getting the content now rather than waiting even longer for me to proof everything. I know the vast majority of readers don't bother to comment on spelling and grammar issues, but my post was to avoid the comments section turning into a bunch of short posts complaining about errors that will be corrected shortly. :)

Iger - Wednesday, November 8, 2006

Pff, of course we would! If I would like to read a novel I would find a book! Results first - proofing later... if ever :) Thanks for the article!

JarredWalton - Wednesday, November 8, 2006

Did I say an hour? Okay, how about I just post here when I'm done reading/editing? :)

JarredWalton - Wednesday, November 8, 2006

Okay, I'm done proofing/editing. If you still see errors, feel free to complain. Like I said, though, try to keep them in this thread.

--Jarred

LuxFestinus - Thursday, November 9, 2006

Pg. 3 under **Unified Shaders**

Should read as follows:

*Until now, building a GPU with unified shaders would not have **been** desirable, let alone practical, but Shader Model 4.0 lends itself well to this approach.*

Good try though. ;)

shabby - Wednesday, November 8, 2006

$600 for the gtx and $450 for the gts is pretty good seeing how much they crammed into the gpu, makes you wonder why the previous gen topped 650 bucks at times.

dcalfine - Wednesday, November 8, 2006

How does the 8800GTX compare to the 7950GX2? Not just in FPS, but also in performance/watt?

dcalfine - Wednesday, November 8, 2006

Ignore ^^^sorry

Hot card by the way!

neogodless - Wednesday, November 8, 2006

I know you touched on this, but I assume that DirectX 10 is still not available for your testing platform, Windows XP Professional SP2, and additionally no games have been released for that platform. Is this correct? If so...

Will DirectX 10 be made available for Windows XP?

Will you publish a new review once Vista, DirectX 10 and the new games are available?

Can we peek into the future at all now?

JarredWalton - Wednesday, November 8, 2006

DX10 will be Vista only according to Microsoft. What that means according to some game developers is that DX10 support is going to be somewhat slow, and it's also going to be a major headache because for the next 3-4 years they will pretty much be required to have a DX9 rendering solution along with DX10.